Influence of Dataset Size on Subclass-Aware Decoding of Word ERPs

Brain-computer interface (BCI) protocols that rely on event-related potentials (ERPs) typically present different external stimuli to the user and record whether one of these stimuli was perceived as the attended target stimulus, while the remaining stimuli are to be ignored as so-called non-target stimuli. The machine learning methods used to classify target versus non-target ERP responses in the recorded brain signals, such as EEG, have to solve a two-class problem, and most model classes assume a Gaussian distribution of ERP features for both the target and the non-target class. Depending on the experimental protocol, however, the non-target class is usually made up of multiple subclasses, while the target class is homogeneous. An example is a BCI-based spelling protocol in which single word stimuli are highlighted sequentially: the non-target class consists of all words that are currently ignored, i.e. not selected, while the target class consists of a single word only.
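
One way to make this mismatch explicit is the following illustrative formalization, which is not taken from the cited work. It writes the class-conditional densities of a feature vector $x$ for $K$ non-target subclasses. The symbols $\mu_T$, $\mu_k$, $\pi_k$ and the shared covariance $\Sigma$ are assumptions introduced here for illustration only:

$$
p(x \mid \text{target}) = \mathcal{N}(x;\, \mu_T, \Sigma), \qquad
p(x \mid \text{non-target}) = \sum_{k=1}^{K} \pi_k \, \mathcal{N}(x;\, \mu_k, \Sigma), \qquad
\sum_{k=1}^{K} \pi_k = 1 .
$$

Under these assumptions, a two-class Gaussian model replaces the mixture on the right by a single Gaussian, which is exact only if all subclass means $\mu_k$ coincide.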

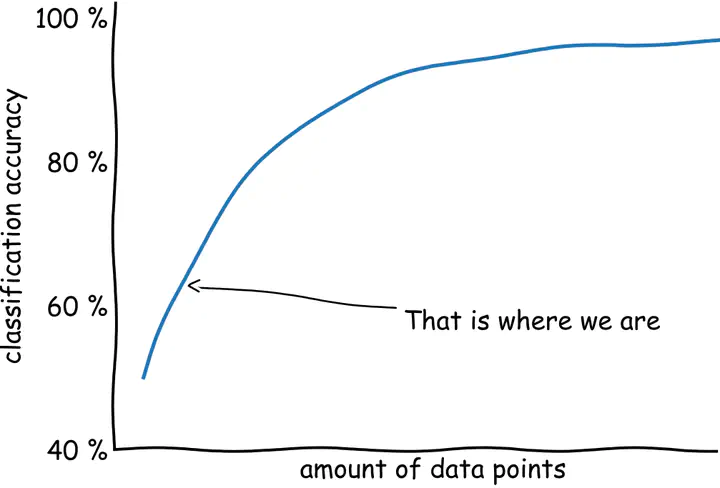

For one particularly heterogeneous visual ERP dataset, the benefit of treating subclasses explicitly has been shown, but for other datasets subclass treatment does not improve decoding performance. It is hypothesized, however, that successful subclass treatment may depend on the size of the dataset, in particular on no subclass being too small.

Task

This project shall investigate how large training datasets need to be for a benefit of subclass treatment to be observed in auditory ERP protocols as well, such as the one used for the rehabilitation training of stroke patients with aphasia [2].

[2] Sosulski, J., & Tangermann, M. (2022). Introducing block-Toeplitz covariance matrices to remaster linear discriminant analysis for event-related potential brain–computer interfaces. Journal of Neural Engineering, 19(6), 066001. https://doi.org/10.1088/1741-2552/ac9c98
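
The kind of dataset-size analysis described in the task could be prototyped roughly as follows. This is a minimal sketch on synthetic Gaussian data, not the method of [2] and not an established subclass-aware decoder. The "subclass-aware" variant is simply a multi-class shrinkage LDA that treats every non-target word as its own class and scores epochs by the target posterior. The feature dimension, number of subclasses, class means, training sizes, and the helper names `simulate`, `target_auc` and `learning_curve` are all assumptions made for illustration.

```python
import numpy as np
from sklearn.discriminant_analysis import LinearDiscriminantAnalysis
from sklearn.metrics import roc_auc_score

N_FEATURES = 10    # hypothetical ERP feature dimension
N_SUBCLASSES = 5   # hypothetical number of non-target words


def simulate(n_per_class, means, rng):
    """Draw n_per_class epochs per class: label 0 = target, 1..K = non-target subclasses."""
    X = np.concatenate(
        [rng.normal(loc=m, scale=1.0, size=(n_per_class, len(m))) for m in means]
    )
    labels = np.repeat(np.arange(len(means)), n_per_class)
    return X, labels


def target_auc(X_tr, y_tr, X_te, is_target_te, target_label):
    """Fit a shrinkage LDA and return the test AUC of the target-class posterior."""
    lda = LinearDiscriminantAnalysis(solver="lsqr", shrinkage="auto").fit(X_tr, y_tr)
    col = list(lda.classes_).index(target_label)
    return roc_auc_score(is_target_te, lda.predict_proba(X_te)[:, col])


def learning_curve(sizes, n_test=500, seed=0):
    """Compare two-class and subclass-aware LDA for several training sizes per class."""
    rng = np.random.default_rng(seed)
    # Assumed class means: random offsets, plus a shift of the target on feature 0.
    means = rng.normal(scale=0.5, size=(N_SUBCLASSES + 1, N_FEATURES))
    means[0, 0] += 1.5
    X_te, lab_te = simulate(n_test, means, rng)
    is_target_te = (lab_te == 0).astype(int)

    results = {}
    for n in sizes:
        X_tr, lab_tr = simulate(n, means, rng)
        binary = (lab_tr == 0).astype(int)  # 1 = target, 0 = any non-target
        results[n] = {
            # Non-target subclasses pooled into one class.
            "two_class": target_auc(X_tr, binary, X_te, is_target_te, 1),
            # Each non-target subclass modelled as its own class.
            "subclass_aware": target_auc(X_tr, lab_tr, X_te, is_target_te, 0),
        }
    return results


curve = learning_curve([5, 20, 80])
for n, aucs in curve.items():
    print(f"{n:3d} epochs per class: {aucs}")
```

On real recordings, the synthetic `simulate` step would be replaced by ERP features extracted from epoched EEG, and each training size would be repeated over several random subsamples to obtain confidence intervals for the learning curve.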

Skills required

- BCI background, obtained by completing the course SOW-BKI 323 or SOW-MKI 74

- Experience in ERP data analysis and signal processing

- Excellent knowledge of linear discriminant analysis

- Very strong programming skills in Python (familiarity with numpy, sklearn, MNE)

- Good mathematical background and intuition